AI Tools

Coverage of the tools, platforms, and developer ecosystems shaping how AI is built and deployed.

Anthropic announced Claude Design, a research preview that lets subscribers use Claude to generate presentations, prototypes and slides, according to Engadget. The app is powered by a system Anthropic calls Opus 4.7 and is available on Pro, Max, Team and Enterprise plans. It arrives the same week Adobe and Canva unveiled their own visual AI assistants.

Anthropic Launches Claude Design for Generating Prototypes and Slides

Anthropic introduced Claude Design, an experimental product that generates visuals such as prototypes, slides, and one-pagers from text prompts, according to TechCrunch. The company says it targets founders and product managers without design backgrounds. Users describe what they want and can refine outputs through direct edits or follow-up requests.

Google Adds Google Photos Integration to Gemini App Image Generation

This article is based on a single primary source and has not been independently corroborated. DeepBrief is monitoring for additional confirmation.

NVIDIA Details Dynamo Stack for Agentic Coding Inference

NVIDIA has published a technical post on NVIDIA Dynamo, its inference framework aimed at the serving demands of coding agents and other agentic AI workflows. The post, published on the NVIDIA Developer Blog, frames Dynamo as a response to rising production use of agent-generated code at companies including Stripe, Ramp, and Spotify. NVIDIA opens its post with a set of third-party adoption figures intended to establish the scale of agent-driven code generation.

AWS Adds Per-Principal Cost Attribution to Amazon Bedrock

Amazon Web Services announced granular cost attribution for Amazon Bedrock inference, a feature AWS says automatically assigns inference costs to the IAM principal that made each API call. The change is described in a post on the AWS Machine Learning Blog dated April 17, 2026. According to AWS, attribution flows into AWS Billing across models with "no resources to manage and no changes to your existing workflows."

Cloudflare Positions AI Gateway and Workers AI as Unified Inference Layer

Cloudflare announced an expansion of its AI platform that routes calls to over 70 models from more than 12 providers through a single API, adds custom metadata for cost tracking, and previews a "bring your own model" path via Replicate's Cog containers. The company frames the update around agent workloads sensitive to latency and provider reliability.

Cloudflare Says Its Unweight System Compresses LLM Weights 15–22% Losslessly

Cloudflare has published details of *Unweight*, a lossless compression system the company says reduces large language model weights by 15–22% on Llama-3.1-8B while preserving bit-exact outputs and requiring no specialized hardware. According to Cloudflare, the technique works by compressing the exponent bytes of BF16 weights using Huffman coding and decompressing them inside GPU on-chip memory before feeding the results directly to tensor cores.
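The general idea of Huffman-coding BF16 exponents can be illustrated with a small, CPU-only sketch. This is not Cloudflare's implementation: the function names (`to_bf16`, `split_bf16`, `join_bf16`, `huffman_code`, `decode`) are hypothetical, the weights are synthetic Gaussian values rather than Llama-3.1-8B tensors, and the GPU on-chip decompression step is not modeled. The sketch assumes the standard BF16 layout (1 sign bit, 8 exponent bits, 7 mantissa bits), codes only the exponent, and stores the sign and mantissa unchanged.

```python
import heapq
import random
import struct
from collections import Counter


def to_bf16(x: float) -> int:
    """Truncate a float to BF16 by keeping the top 16 bits of its float32 encoding."""
    return struct.unpack(">I", struct.pack(">f", x))[0] >> 16


def split_bf16(bits: int) -> tuple[int, int]:
    """Return (exponent byte, sign-and-mantissa byte) for one BF16 value."""
    exponent = (bits >> 7) & 0xFF
    sign_mantissa = ((bits >> 15) << 7) | (bits & 0x7F)
    return exponent, sign_mantissa


def join_bf16(exponent: int, sign_mantissa: int) -> int:
    """Inverse of split_bf16: rebuild the 16-bit pattern exactly."""
    return ((sign_mantissa >> 7) << 15) | (exponent << 7) | (sign_mantissa & 0x7F)


def huffman_code(symbols: list[int]) -> dict[int, str]:
    """Build a prefix-free code (symbol -> bit string) from symbol frequencies."""
    counts = Counter(symbols)
    if len(counts) == 1:  # degenerate case: a single distinct symbol
        return {next(iter(counts)): "0"}
    # Heap entries: (frequency, unique tiebreak, partial code table).
    heap = [(n, i, {s: ""}) for i, (s, n) in enumerate(counts.items())]
    heapq.heapify(heap)
    tiebreak = len(heap)
    while len(heap) > 1:
        n1, _, left = heapq.heappop(heap)
        n2, _, right = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in left.items()}
        merged.update({s: "1" + c for s, c in right.items()})
        heapq.heappush(heap, (n1 + n2, tiebreak, merged))
        tiebreak += 1
    return heap[0][2]


def decode(bitstring: str, code: dict[int, str]) -> list[int]:
    """Decode a bit string produced with `code` back into symbols."""
    inverse = {c: s for s, c in code.items()}
    out, current = [], ""
    for bit in bitstring:
        current += bit
        if current in inverse:
            out.append(inverse[current])
            current = ""
    return out


# Synthetic stand-in for model weights (assumption: small, zero-centered values).
random.seed(0)
weights = [to_bf16(random.gauss(0.0, 0.02)) for _ in range(20_000)]

pairs = [split_bf16(w) for w in weights]
exponents = [e for e, _ in pairs]
code = huffman_code(exponents)
encoded = "".join(code[e] for e in exponents)

# Round trip: decoded exponents plus stored sign/mantissa must reproduce every bit.
restored = [join_bf16(e, sm) for e, (_, sm) in zip(decode(encoded, code), pairs)]
assert restored == weights

original_bits = 16 * len(weights)
compressed_bits = len(encoded) + 8 * len(weights)  # codebook overhead ignored
saving = 1 - compressed_bits / original_bits
print(f"exponent-only Huffman saving on synthetic data: {saving:.1%}")
```

The saving printed for synthetic data should not be compared with the 15–22% figure Cloudflare reports. Real tensors have different exponent distributions, and a production system has to account for codebook storage, block alignment, and any tensors left uncompressed.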

OpenAI Unveils Rosalind Life Sciences Model With Restricted Access

OpenAI has introduced a life sciences model called Rosalind that the company positions as a tool for accelerating drug discovery, according to a report published by Decrypt on April 18, 2026. Decrypt reports that the model is not broadly available to developers or the public, and that access is limited to select partners. OpenAI's specific criteria for access, pricing, and commercial terms were not disclosed in the Decrypt report.

Zoom Adds World ID Verification to Flag Human Participants

Zoom has integrated World's Deep Face biometric verification into its video meetings, according to The Next Web, letting participants display a "Verified Human" badge by matching live video against iris scans captured by World's Orb device. The feature is aimed at deepfake impersonation attacks on corporate video calls.

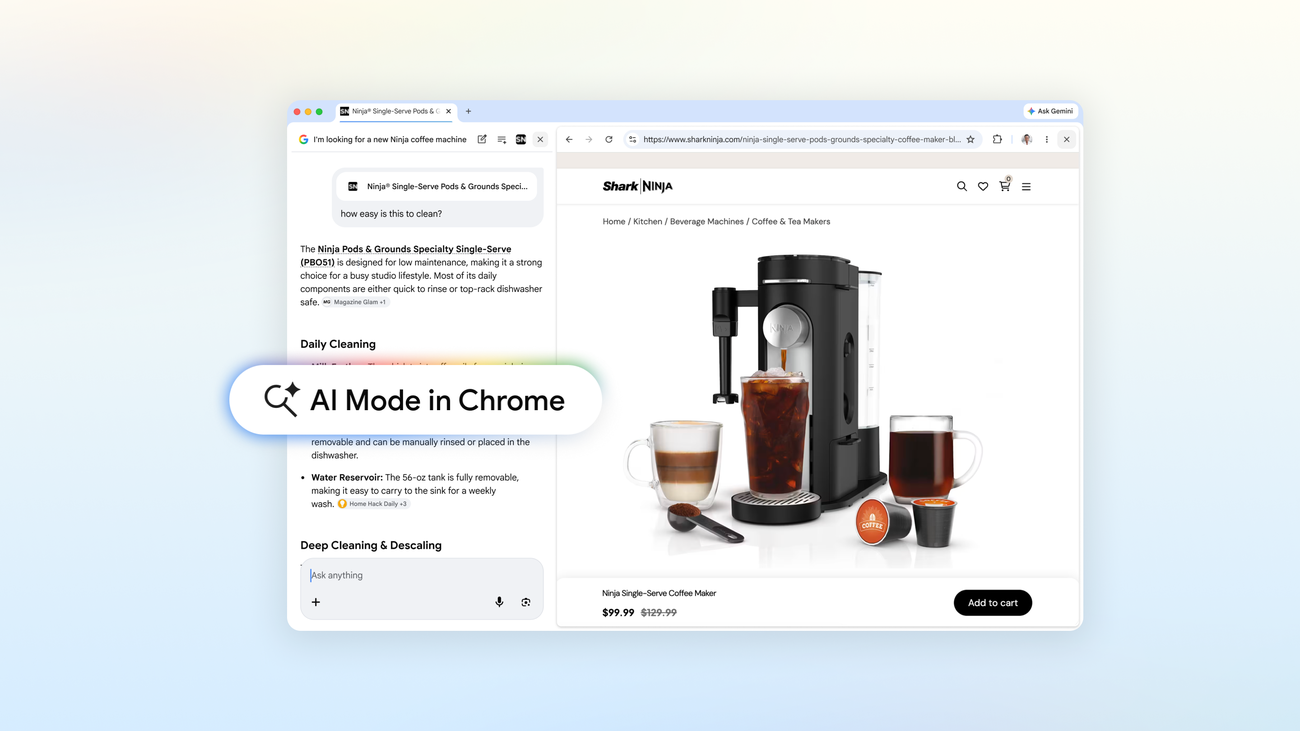

Google Expands AI Mode Inside Chrome With New Browsing Features

Google announced updates to AI Mode in the Chrome browser, describing changes to how users can query, summarize, and navigate web pages from within the browser interface. The company published the details in a post on its Keyword blog dated April 16, 2026. According to the Keyword post, the update positions AI Mode as an integrated way to "explore the web" directly from Chrome, rather than through a separate Search surface.

NVIDIA DeepStream 9 Adds Coding Agent Integration for Vision AI

NVIDIA has released DeepStream 9, a version of its vision AI SDK that integrates with AI coding agents including Claude Code and Cursor to generate pipeline code, according to a post on the NVIDIA Developer Blog. The company says the integration is aimed at reducing the setup work required to build real-time vision AI applications. In the blog post, NVIDIA describes building such applications as a process that typically requires "intricate data pipelines, countless lines of code, and lengthy development cycles."

AWS Launches Automated Reasoning Checks in Amazon Bedrock for AI Compliance

Amazon Web Services has detailed a feature called Automated Reasoning checks in Amazon Bedrock, which the company describes as applying formal verification methods to validate outputs from generative AI models. According to the AWS Machine Learning Blog post announcing the capability, the checks are intended to produce what AWS calls "mathematically proven" results, positioned as an alternative to probabilistic guardrails used elsewhere in the industry.

AWS Details Video Semantic Search on Nova Multimodal Embeddings

Amazon Web Services published a technical walkthrough describing how developers can build a video semantic search system on Amazon Bedrock using its Nova Multimodal Embeddings model, which the company says processes text, documents, images, video, and audio into a shared vector space. The post, published on the AWS Machine Learning Blog, includes a reference implementation on GitHub and an architecture diagram covering ingestion and query pipelines.
