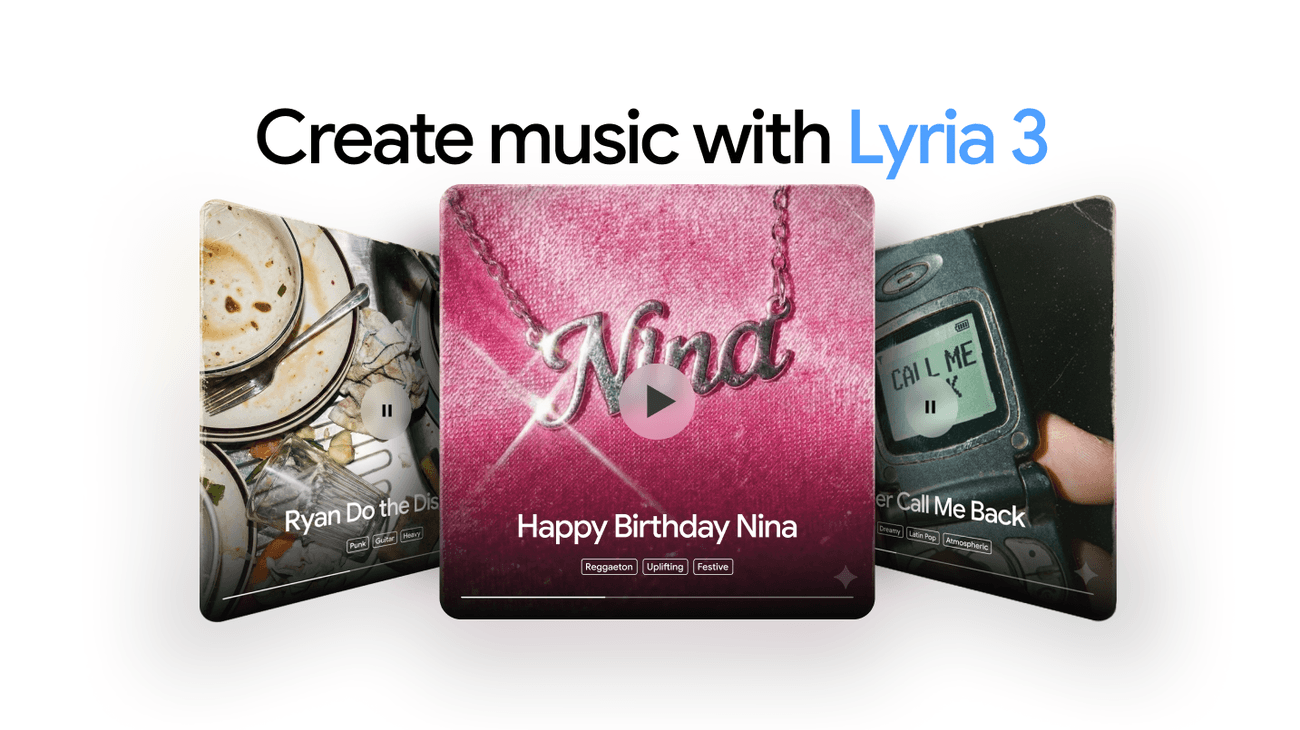

Google DeepMind has launched music generation inside the Gemini app, powered by Lyria 3, which the company describes as its most advanced music model. The feature lets users produce 30-second audio tracks from text prompts or image inputs.

Editor's Note: This article is based on an official announcement from the source organization. Claims regarding performance, benchmarks, and capabilities have not been independently verified.

The move brings AI music creation into one of the world's most widely used AI assistants, rather than keeping it confined to a standalone product or developer API. Google has been developing its music models for several years: the MusicLM research model came first, and earlier Lyria versions powered experimental tools such as YouTube's Dream Track feature for creators. Lyria 3 is the latest product of that research, now deployed at consumer scale.

Lyria 3 is Google's clearest signal yet that AI-generated audio is no longer a research curiosity but a core feature of its flagship AI product.

From Research Lab to the Gemini App

Integrating Lyria 3 into Gemini is a significant product decision. Rather than launching a dedicated music app, Google has chosen to embed music generation directly into a general-purpose assistant, the same interface people use to write emails, summarize documents, or analyze images. This positions music creation not as a specialist activity, but as a natural extension of everyday AI interaction.

The dual input mechanism, which accepts both text and images, is notable. Users can describe a mood or genre in words, or supply an image and let the model translate its visual tone into sound. That image-to-music pathway opens creative possibilities that text-only tools cannot match, letting a photograph of a rainy street or a piece of artwork become a musical prompt.

What Lyria 3 Actually Produces

Output is limited to 30-second tracks, which places the tool firmly in the territory of social content, short-form video scoring, mood sketches, and creative experimentation. Thirty seconds is long enough to be musically meaningful and short enough that each request can presumably be generated quickly and at modest compute cost.

Google has not publicly disclosed the technical architecture of Lyria 3 beyond describing it as the company's most advanced music generation model. The initial announcement does not fully detail genre range, audio quality (sample rate, bitrate), or the degree of stylistic control available to users. Whether users can specify instruments, tempo, key, or structure beyond a freeform text description remains unclear from the available information.

The competitive context matters here. Suno and Udio have built substantial user bases specifically around AI music generation, offering longer track lengths, vocal synthesis, and iterative editing features. Google's advantage is distribution: Gemini already sits in front of hundreds of millions of users, so Lyria 3 does not need to win a product comparison. It simply needs to be good enough to satisfy curiosity and creative need at the point of request.

Availability and Access

Google has not specified whether Lyria 3 music generation is available to all Gemini users globally or restricted to specific subscription tiers or regions at launch. The company has not announced pricing for the feature as a standalone capability, and it is not currently available as a public API for developers building third-party applications, though Google's broader AI ecosystem suggests API access could follow.

For developers and professional creators, the absence of an API at launch limits immediate workflow integration. Audio producers, game developers, and video creators who want to pipe music generation into their own tools will need to wait for broader programmatic access. The current release is consumer-facing first.

The Broader Shift in Multimodal AI

This launch fits a clear pattern across major AI labs: extending flagship models into audio and music now that text and image generation have matured. OpenAI has explored audio generation through its GPT-4o voice capabilities, and Meta has released AudioCraft as an open-source framework for researchers. Google's approach, embedding music creation in Gemini rather than releasing open weights or a standalone app, reflects a product-first, ecosystem-retention strategy.

The image-to-music capability in particular signals an ambition to make Gemini genuinely multimodal in both input and output: capable not just of understanding multiple formats but of generating across them. A user could theoretically move from image analysis to music creation to text summarization within a single Gemini session without switching tools.

For non-technical users, the barrier to making something that sounds musical has just dropped significantly. No knowledge of DAWs, music theory, or sound design is required. The creative entry point is a sentence or a photograph.

What This Means

For everyday users, Gemini now offers a fast, low-friction path to original music for personal projects, social content, or creative exploration. For Google, it deepens the case for keeping users inside its AI ecosystem rather than reaching for specialist tools.